Quality Management System Software Since 2004

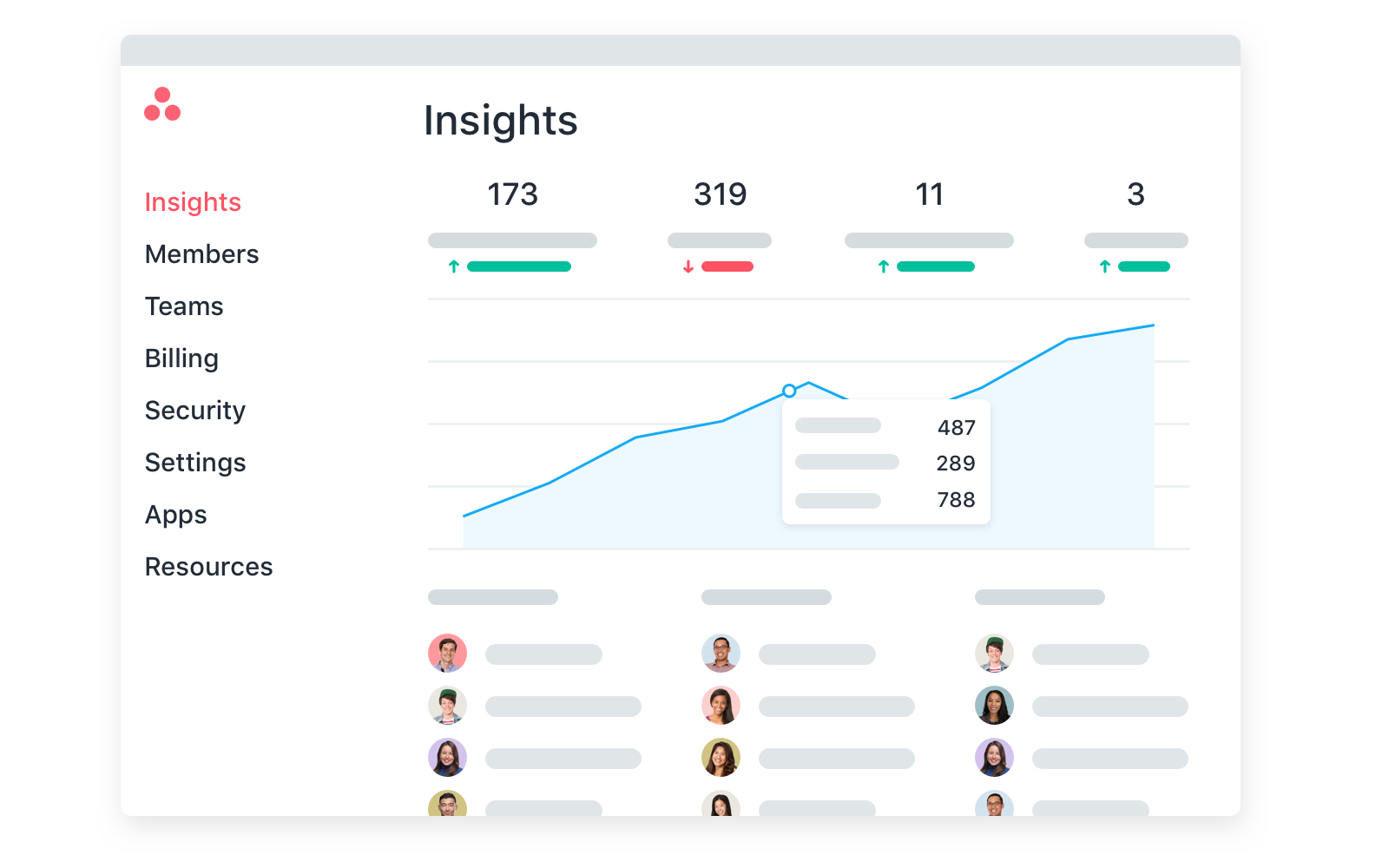

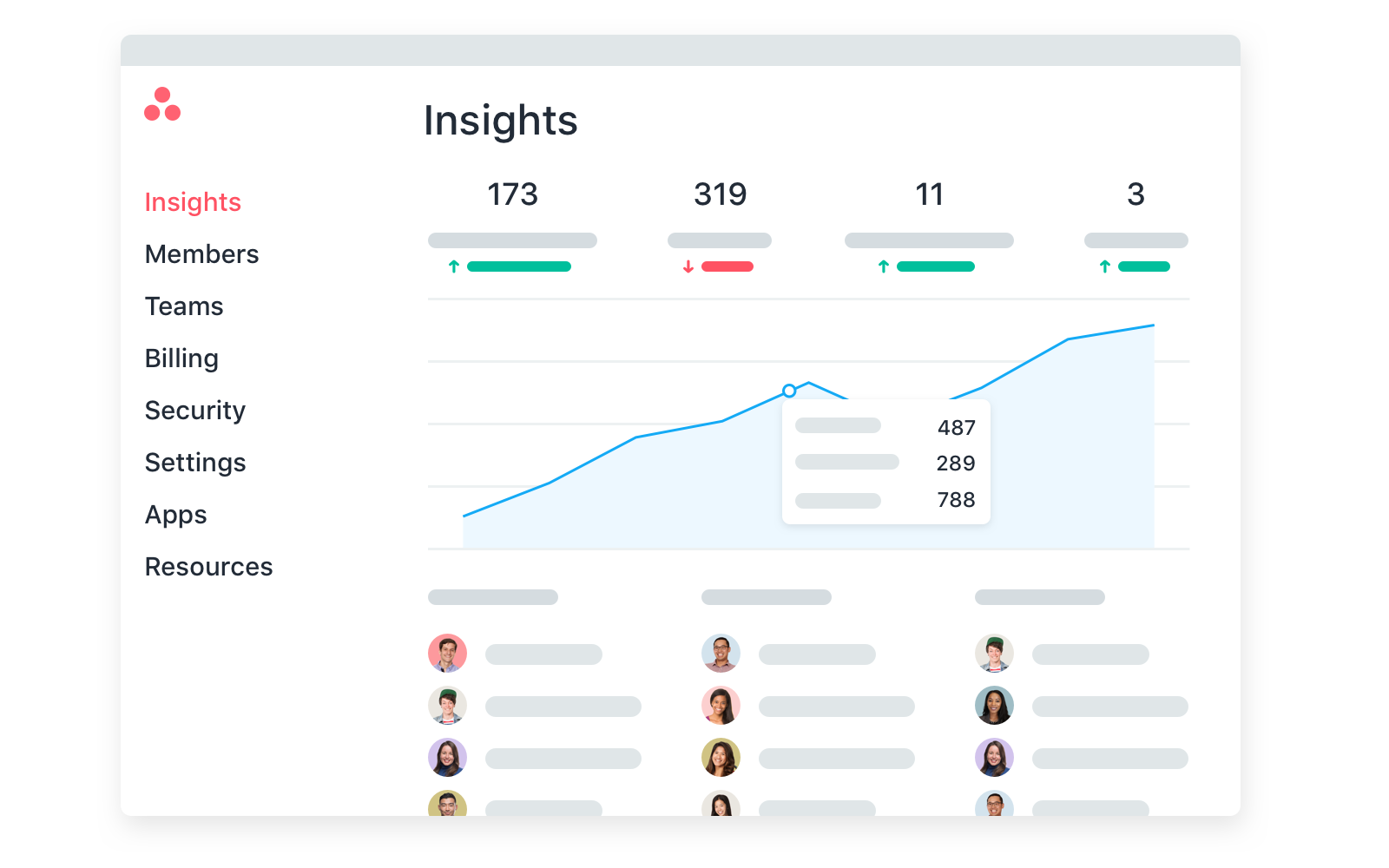

The NIIX Quality Management System total solution consists of ISO Document Control software, Audit Schedule & Audit Report software, CAPA software, Supplier Corrective Action Request software, Calibration Management software, Complaint Management software, Quality Objective Management software, Gap Analysis software, and Risk Management software.

Each product aims to automate and simplify QMS processes and maintain ISO compliance.

Established in 1996

Quality Management System solutions since 2004

9 comprehensive Quality Management System software products

Over 1000 users worldwide

Futuristic QMS System Software Simplifies Your QMS Implementation

Transforming the way you manage quality management systems towards ISO certification, compliance, and continual improvement.

ISO Document Control Software

Specifically developed according to ISO Document Control requirements. Its automated workflow includes document creation, review and approval, publishing, revision, revision control, obsolete document archiving, document change records, a document master list, document cross-references, notifications on pending tasks, and more.

Audit Schedule & Audit Report Software

Simplifies ISO audit compliance. Enables organizations to manage the entire audit process, including planning, execution, approval, and reporting, so that ISO audits comply with regulatory and compliance requirements.

Corrective and Preventive Action (CAPA) Software

Gives companies control of the CAPA process from creation through validation and closure. Identifies and addresses the root causes of quality issues while supporting ISO compliance, lowering the risk of business impact and repeat issues, and fostering continuous improvement to achieve QMS objectives.

Supplier Corrective Action Request Software

Automates the entire SCAR process end to end: initiating a SCAR with critical information, investigating and determining the actual root cause, performing a risk assessment, identifying and implementing an action plan, performing verification and effectiveness steps, and resolving the deficiencies.

Calibration Management Software

Makes it simple to keep track of calibration records and manage the calibration process in a cloud-based solution. It offers everything you need to document, control, schedule, and manage calibration requirements, including specific calibration criteria, schedule assignment, unscheduled or emergency calibration, calibration reports and certificates, and more.

Complaint Management Software

Designed to accurately identify, capture, and track all nonconformances and customer complaints, analyse them, develop action plans, and use data and analytics insights to correct issues and prevent recurrence. It helps optimize customer success, satisfaction, and retention.

Quality Objective Management Software

Simplifies the processes to initiate, monitor, measure, and improve quality objective results and performance. It helps make Quality Objectives effective in addressing what needs to be improved, in line with the S.M.A.R.T. (specific, measurable, achievable, realistic, and time-based) concept.

Gap Analysis Software

Provides a systematic mechanism for organizations, especially process improvement teams, to assess and analyse the quality gap between current business performance and long-term goals, identify a QMS action plan, and generate a comprehensive gap analysis report for improvement.

Risk Management Software

Provides a systematic framework to simplify the identification, analysis, monitoring, review, approval, and treatment of risks, and to unify all risk-related activities and documents for effective, manageable, and consistent risk management.

Software Built for All Industries Worldwide

NIIX provides a state-of-the-art, futuristic, and scalable software platform suited to small and medium-sized companies, multinational corporations, and government agencies worldwide. It is growth-ready, scaling cost-effectively as your business expands.

We’re Proud to Serve Industry Leaders

Companies of every size from various sectors and industries rely on NIIX QMS System Software Solutions each day.

Available with 2 Deployment Options

No software installation required at your server.

Software installed by NIIX at your preferred server.

What’s Next?

Request a Demo

Request a demo session at your preferred time to learn more about each NIIX QMS software product.

Ask Us a Question

We are ready to help you make the right choice for ISO effectiveness and efficiency measures.